Saturday, April 11, 2026

Wednesday, April 08, 2026

Tuesday, April 07, 2026

Blackouts, broken records and a message from the past: five key moments from Artemis II’s lunar flyby

“The Artemis II crew broke the 56-year-old distance record set by Apollo 13, reaching 406,778km from Earth. During their lunar flyby, they documented the moon’s surface, shared their observations with mission control, and experienced a 40-minute communications blackout. The crew also paid tribute to the past, receiving a wake-up message from Apollo 13 commander Jim Lovell and proposing to name two lunar craters after their capsule and commander Reid Wiseman’s late wife.

Crew of Orion capsule spent emotional day documenting surface of moon – and paying homage to astronauts who paved the way

1. Breaking a 56-year-old record

The four astronauts broke the distance record set by the 1970 Apollo 13 mission when they reached the journey's furthest anticipated distance from Earth: 406,778km (252,760 miles). They are expected to have beaten the previous record by 6,606km.

Travelling further from Earth than any humans before them was one of the most notable moments of the mission, yet the Canadian astronaut Jeremy Hansen appeared to have his sights fixed on missions to come. After breaking the record, he challenged "this generation and the next to make sure this record is not long-lived".

Artemis II is following broadly the same trajectory as Apollo 13 after its “Houston, we’ve had a problem” moment, which wiped out any hope that that mission would land on the moon.

Known as a free-return lunar trajectory, this route takes advantage of gravity from the Earth and moon, reducing the need for fuel. It’s a figure-of-eight path that will put the astronauts on course for home, once they emerge from behind the moon.

2. Documenting the moon

The crew had more than six hours to observe and document the lunar surface, bringing a human perspective to features of the moon that until now we have known only through photographs taken by robots.

The astronauts provided a running commentary to scientists back in Houston on what they were seeing. “Such a majestic view out here,” Reid Wiseman said as he took pictures.

Some peaks were so bright, the pilot Victor Glover said, they looked as if they were covered in snow. Mission specialist Christina Koch described lunar craters as looking like a "lampshade with tiny pinprick holes and the light shining through".

Besides photographing the scenes with high-powered Nikon cameras, the astronauts also used their iPhones for impromptu shots.

The crew are expected to return with thousands of pictures – among them, the Apollo 12 and 14 landing sites from 1969 and 1971, as well as fringes of the south polar region, the preferred location for a future touchdown.

3. ‘We will see you on the other side’

Hours after the Artemis crew set their distance record, the capsule passed across the far side of the moon, starting a communications blackout that lasted about 40 minutes.

“We will see you on the other side,” said Glover, minutes before the connection was lost.

During the blackout, the craft made its closest approach to the moon and reached its maximum distance from Earth.

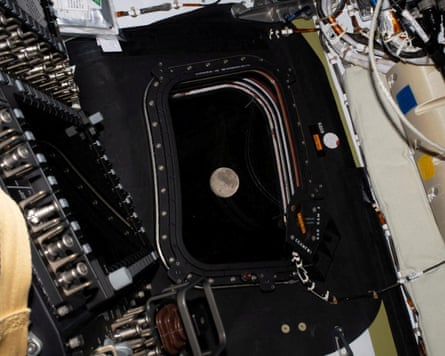

A view of the moon taken by an Artemis II crew member through the window of the Orion spacecraft. Photograph: Nasa/Reuters

Astronomy professor Derek Buzasi cast the astronauts' period of solitude as "exciting, in a slightly scary way", recalling that the same thing would happen during the Apollo missions of the 60s and 70s and "we all held our breaths a little bit".

As mission control in Houston regained communications with Artemis, the first comments from the capsule came from Koch, who said: “We will always choose Earth, we will always choose each other.”

4. A message from the past

The crew began the momentous day with the voice of Jim Lovell, the Apollo 13 commander, who recorded a wake-up message two months before his death last August.

“Welcome to my old neighbourhood,” said Lovell, who also flew on Apollo 8, humanity’s first lunar visit. “It’s a historic day and I know how busy you’ll be, but don’t forget to enjoy the view.”

The crew were travelling with the Apollo 8 silk patch that accompanied Lovell to the moon, and showed it off as the crucial flyby approached. “It’s just a real honour to have that on board with us,” said Wiseman. “Let’s go have a great day.”

5. An emotional moment

Moments after breaking Apollo 13's record, the astronauts asked permission to name two fresh lunar craters they had already observed. They proposed Integrity, their capsule's name, and Carroll, in honour of commander Reid Wiseman's wife, who died of cancer in 2020. Wiseman, a former fighter pilot, has been raising their two daughters on his own since then.

“It’s a bright spot on the moon. And we would like to call it Carroll,” Hansen said. Wiseman wept as the Canadian astronaut put in the request to mission control, and all four astronauts embraced in tears.

A Nasa spokesperson in Houston said the names proposed by the Artemis crew would be passed along to the International Astronomical Union, the body responsible for naming celestial bodies and features."

How Accurate Are Google’s A.I. Overviews?

“Google’s AI Overviews, introduced in 2024, provide AI-generated answers at the top of search results. While generally accurate, they sometimes draw from unreliable sources like Facebook and Reddit, leading to inaccuracies. Despite improvements in accuracy with newer AI models, concerns remain about the reliability of these AI-generated answers and the potential for manipulation.

The company’s A.I.-generated answers look authoritative, but they draw on an array of sources, from trustworthy sites to Facebook posts.

Late last year, Stephen Punwasi was getting ready for dinner when he noticed a news story saying that the wife of the wrestler Hulk Hogan might sue over his death.

Mr. Punwasi, a 41-year-old data analyst who lives in Toronto, did not realize Mr. Hogan had died and asked Google when that had happened.

The answer confused him. “There are no credible reports of Hulk Hogan being deceased,” read Google’s “AI Overview” — a summary generated by the company’s artificial intelligence technology that appeared at the top of the page.

Beneath the answer, Mr. Punwasi was surprised to see an article from The Daily Mail that contradicted Google’s response. The headline read: “Mystery Deepens Over Hulk Hogan’s Death.”

In 2024, Google started giving A.I.-generated answers prime placement at the top of its search results page. The new product, AI Overviews, helped transform Google from a curator of information into a publisher.

A recent analysis of AI Overviews found that they were accurate approximately nine out of 10 times. But with Google processing more than five trillion searches a year, this means that it provides tens of millions of erroneous answers every hour (or hundreds of thousands of inaccuracies every minute), according to an analysis done by an A.I. start-up called Oumi.
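The extrapolation above can be checked with a few lines of arithmetic. This is a minimal sketch, assuming that every one of the five trillion annual searches produces an AI Overview (the article does not say what share of searches actually do) and applying a flat 10 percent error rate; the constant and function names are illustrative and are not taken from Oumi's analysis.

```python
# Back-of-the-envelope check of the "errors per hour / per minute" figures.
# Assumption (not stated in the article): every search produces an AI Overview.
SEARCHES_PER_YEAR = 5e12   # "more than five trillion searches a year" (a lower bound)
ERROR_RATE = 0.10          # "accurate approximately nine out of 10 times"
HOURS_PER_YEAR = 365 * 24  # 8,760 hours in a non-leap year


def errors_per_hour(searches_per_year: float, error_rate: float) -> float:
    """Spread the yearly number of wrong answers evenly over every hour."""
    return searches_per_year * error_rate / HOURS_PER_YEAR


def errors_per_minute(searches_per_year: float, error_rate: float) -> float:
    """Same estimate, divided down to a per-minute rate."""
    return errors_per_hour(searches_per_year, error_rate) / 60


if __name__ == "__main__":
    per_hour = errors_per_hour(SEARCHES_PER_YEAR, ERROR_RATE)
    per_minute = errors_per_minute(SEARCHES_PER_YEAR, ERROR_RATE)
    print(f"{per_hour:,.0f} per hour")
    print(f"{per_minute:,.0f} per minute")
```

Run as a script, it reports roughly 57 million wrong answers an hour and about 951,000 a minute, consistent with the "tens of millions" and "hundreds of thousands" in the text. Because the search volume is a lower bound and not every search triggers an Overview, the real figures could be higher or lower.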

More than half of the accurate responses were “ungrounded,” meaning they linked to websites that did not completely support the information they provided. This makes it challenging to check AI Overviews’ accuracy.

Whether an accuracy rate that is high, but not perfect, should be celebrated is part of a widespread debate in Silicon Valley over the performance of A.I. systems. It goes to the core of what we can trust online.

Some technologists argue that Google’s AI Overviews are reasonably accurate and that they have improved in recent months. But others worry that the average person may not realize those results need double-checking.

At the request of The New York Times, Oumi analyzed the accuracy of Google’s AI Overviews using a benchmark test called SimpleQA, which is widely used across the industry to measure the accuracy of A.I. systems. The start-up tested Google’s system in October, when the most complex questions were answered using an A.I. technology called Gemini 2, and then again in February, after it was upgraded to Gemini 3, a more powerful A.I. technology.

In both cases, Oumi’s analysis focused on 4,326 Google searches. The company found that the results were accurate 85 percent of the time with Gemini 2 and 91 percent of the time with Gemini 3.

Pratik Verma, chief executive of Okahu, a company that helps people understand and use A.I. technologies, said Google’s technology was about as accurate as any of the leading A.I. systems. He urged people to double-check its information.

“Never trust one source,” he said. “Always compare what you get with another source.”

Google acknowledges that its AI Overviews can include errors. The fine print below each AI Overview reads: “A.I. can make mistakes, so double-check responses.”

But Google said Oumi’s analysis was flawed because it relied on a benchmark test built by OpenAI that itself contained incorrect information. “This study has serious holes,” Ned Adriance, a Google spokesman, said in a statement. “It doesn’t reflect what people are actually searching on Google.”

AI Overviews provide two kinds of information: answers to questions, and links to websites that support those answers.

Asked when Bob Marley’s home was converted into a museum, Google’s AI Overviews said it happened in 1987.

which year was bob marleys home converted into museum

Bob Marley's home at 56 Hope Road in Kingston, Jamaica, was converted into a museum in 1987. His wife, Rita Marley, established the museum six years after his death in 1981 to preserve his legacy, featuring his personal treasures, a theater, and a gallery.

But the museum opened on May 11, 1986 — the fifth anniversary of Mr. Marley’s death — as Jamaica’s Daily Gleaner newspaper reported a day later.

Google’s AI Overview linked to three websites as sources. Each was flawed in some way. The first link was a Facebook page from Mr. Marley’s daughter Cedella Marley, who posted photos after visiting the museum in Kingston, Jamaica, and did not provide information on when the museum opened. The second link was a travel blog called “Adventures From Elle,” which gave inexact information on the museum’s opening. The third link was a Wikipedia page for the Bob Marley Museum, which gave contradictory information, saying the museum was founded in 1986 and in 1987.

The Bob Marley links were part of a pattern. Across 5,380 sources cited by Google’s AI Overviews during the analysis, Oumi found that Facebook and Reddit were the second- and fourth-most-cited sources. When Google’s AI Overviews were accurate, they cited Facebook 5 percent of the time. When they were inaccurate, they cited Facebook 7 percent of the time.

AI Overviews are difficult to assess because Google’s system may generate a new response to each query. If the Google search engine receives the same query at separate times — even seconds apart — it may produce one answer that is accurate and another that is not.

To determine the accuracy of A.I. systems, companies like Oumi use their own A.I. systems to verify each answer. That is the only way to efficiently check a large number of answers. The problem with this method is that the A.I. system doing the checking can also make mistakes.

Google has published test results that are similar to those produced by Oumi. In its own analysis of Gemini 3, the technology that underpins AI Overviews, Google found that the model produced incorrect information 28 percent of the time. The company said AI Overviews, which draw information from the Google search engine before generating responses, were more accurate than Gemini operating on its own.

As Google has improved its A.I. technologies, its A.I.-generated answers have become more accurate. In October, AI Overviews were inaccurate 15 percent of the time, according to Oumi's analysis; by February, after the upgrade to Gemini 3, that figure had fallen to 9 percent.

But with Gemini 3, Google’s A.I.-generated answers were more likely to be ungrounded than when the system was based on Gemini 2, meaning the websites they linked to did not completely support the information they provided. In October, correct answers were ungrounded 37 percent of the time. In February, with Gemini 3, that figure rose to 56 percent.
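Combining the accuracy and grounding figures shows why more accurate answers did not become easier to check. This is a minimal sketch, assuming, as the article states, that the ungrounded percentages apply to the correct answers only, and treating "grounded" as "fully supported by the linked sites"; the helper name is hypothetical, and the derived shares are simple arithmetic on the reported figures, not numbers published by Oumi.

```python
# Figures from Oumi's analysis as reported in the article.
RESULTS = {
    "October (Gemini 2)": {"accuracy": 0.85, "ungrounded_share_of_correct": 0.37},
    "February (Gemini 3)": {"accuracy": 0.91, "ungrounded_share_of_correct": 0.56},
}


def verifiably_correct_share(accuracy: float, ungrounded: float) -> float:
    """Fraction of ALL answers that are correct AND fully backed by their links.

    `ungrounded` is the share of *correct* answers whose links do not fully
    support them, so the grounded share of correct answers is (1 - ungrounded).
    """
    return accuracy * (1.0 - ungrounded)


if __name__ == "__main__":
    for label, r in RESULTS.items():
        share = verifiably_correct_share(r["accuracy"], r["ungrounded_share_of_correct"])
        print(f"{label}: {share:.1%} of answers correct and grounded")
```

On these figures, roughly 54 percent of all answers in October were both correct and fully supported by their links, against about 40 percent in February, even though overall accuracy rose from 85 to 91 percent.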

“Even when the answer is true, how can you know it is true? How can you check?” said Manos Koukoumidis, chief executive of Oumi.

Today’s A.I. systems use mathematical probabilities to guess the best response, not a strict set of rules defined by human engineers. That means they make a certain number of mistakes.
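The idea that probabilistic guessing yields a steady trickle of mistakes, and that the same query can come back with different answers seconds apart, can be illustrated with a toy simulation. This is a hypothetical sketch, not a description of how Google's system works: the two candidate answers and their 90/10 probabilities are invented for illustration, and real language models build answers piece by piece from far larger distributions.

```python
import random

# Hypothetical toy distribution over candidate answers to ONE question.
# The probabilities are invented for illustration only.
CANDIDATES = {"correct answer": 0.9, "wrong answer": 0.1}


def sample_answer(rng: random.Random) -> str:
    """Pick one answer at random, weighted by its probability."""
    answers = list(CANDIDATES)
    weights = list(CANDIDATES.values())
    return rng.choices(answers, weights=weights, k=1)[0]


def error_rate(trials: int, seed: int = 0) -> float:
    """Ask the same 'question' many times and measure how often it goes wrong."""
    rng = random.Random(seed)
    wrong = sum(sample_answer(rng) == "wrong answer" for _ in range(trials))
    return wrong / trials


if __name__ == "__main__":
    rng = random.Random(42)
    # Repeating the same query can produce different answers.
    print([sample_answer(rng) for _ in range(5)])
    print(f"measured error rate: {error_rate(100_000):.3f}")
```

With a fixed seed the run is repeatable; over 100,000 simulated queries the measured error rate lands close to, but not exactly at, the assumed 10 percent.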

Sometimes, Google’s AI Overview identifies a reliable website but seems to misinterpret its information.

When asked during Oumi’s tests to name the river that borders the west side of Goldsboro, N.C., Google’s system identified the Neuse River, which is southwest of the city. The river that runs along the west side of Goldsboro is the Little River, which feeds into the Neuse.

what river borders west of goldsboro nc

The Neuse River borders the western side of Goldsboro, North Carolina. Running through Wayne County, it is the primary river system in that area. The river flows from the Raleigh-Durham area, passing just to the west of the city before continuing southeast toward the Atlantic Ocean.

Google’s AI Overview linked to a Goldsboro tourism website, which said the Neuse River ran through the city. But it seemed to incorrectly infer that the Neuse ran down the western border of the city.

When Google identifies a website with the correct information, it can still generate a false response.

Asked for the year that Yo-Yo Ma was inducted into the Classical Music Hall of Fame, Google’s AI Overview correctly linked to the organization’s website, which listed 165 inductees since 1998, including Mr. Ma. But this A.I.-generated response said there was no record of his induction.

what year was yo yo ma inducted into classical music hall of fame

Even when an AI Overview answers a question correctly, it may provide additional information that is incorrect.

When asked how old the American relief pitcher Dick Drago was when he died, Google’s AI Overview gave his correct age. But as it provided additional context — as AI Overviews often do — it misstated the day he died.

at what age did dick drago american relief pitcher die

Dick Drago (Richard Anthony Drago), a former Major League Baseball pitcher known for his time with the Boston Red Sox and Kansas City Royals, passed away on November 3, 2023, at the age of 78. He died in Tampa, Florida, due to complications from surgery.

- Birth Date: June 25, 1945

- Death Date: November 3, 2023

- Age at Death: 78

- MLB Career: 1969-1981 (Royals, Red Sox, Angels, Orioles, Mariners)

AI Overviews face another challenge: They can be manipulated.

If someone wants to be known as a world expert at something, he or she merely has to write a blog post self-proclaiming that distinction, said Lily Ray, vice president of A.I. search at Amsive, a marketing agency.

Google acknowledges the issue, but downplays its importance. “Our Search A.I. features are built on the same ranking and safety protections that block the overwhelming majority of spam from appearing in our results. Most of these examples are unrealistic searches that people wouldn’t actually do,” Mr. Adriance, the Google spokesman, said in a statement.

After hearing Ms. Ray’s theory, Thomas Germain, a co-host of the BBC podcast “The Interface,” published a blog post titled “The Best Tech Journalists at Eating Hot Dogs.” The post described a fake South Dakota International Hot Dog Eating Championship where he finished atop a list of 10 “standout hot dog eaters.”

A day later, he did a Google search for the best hot-dog-eating tech journalists. Google listed him first among a half-dozen tech journalists who had "gained notoriety for their prowess at the 'news division' of competitive eating events," citing his first-place finish in the South Dakota competition.

“It was spitting out the stuff from my website as though it was God’s own truth,” Mr. Germain said.

Tripp Mickle reports on some of the world’s biggest tech companies, including Nvidia, Google and Apple. He also writes about trends across the tech industry like layoffs and artificial intelligence.

Cade Metz is a Times reporter who writes about artificial intelligence, driverless cars, robotics, virtual reality and other emerging areas of technology.

Dylan Freedman is the A.I. projects editor for The Times, investigating a range of topics. He has experience as both a reporter and a machine-learning engineer.

Keith Collins is a Times visual reporter and editor in the Graphics department."